Meta has disclosed updated figures on its global efforts to curb scam activity across its platforms and outlined new detection tools designed to alert users to suspicious behavior. According to the company, more than 159 million scam ads were removed in 2025, and 10.9 million Facebook and Instagram accounts linked to scam operations were taken down.

The company says it is deploying new machine‑learning systems across Facebook, Instagram, and WhatsApp to identify patterns associated with fraudulent activity. These AI systems analyze text, images, and contextual signals in combination to detect patterns that Meta says are harder for traditional filters to catch, such as impersonation of public figures or brands and attempts to redirect users to fraudulent websites.

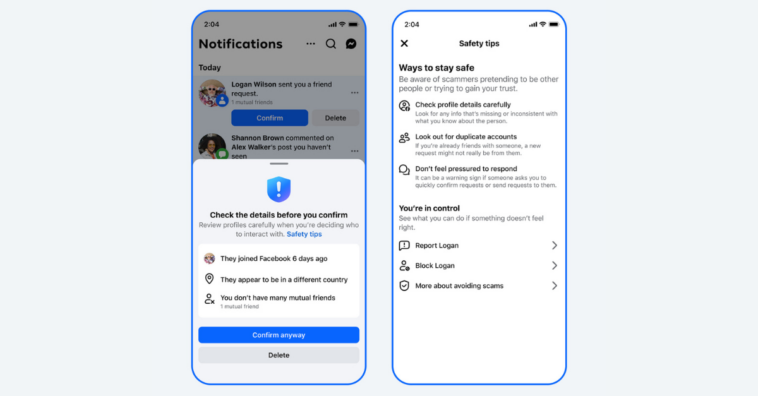

Meta also indicates it is broadening the scope of its tools beyond outright removals. In addition to taking down confirmed scams, it will show users warnings when certain risky patterns are detected, even if a profile or message has not been confirmed as a scam. These warnings aim to give users additional context before they act.

Across its family of apps, Meta outlined three primary warning features:

- Facebook Friend Request Alerts: Users may see notifications when a friend request comes from an account with few mutual connections, a recent join date, or signs that it is based in another country. Meta associates these traits with cloned or deceptive profiles.

- WhatsApp Device Linking Notices: WhatsApp will now flag requests to link a user’s account to a new device, showing where the request originates and explaining what access linking would grant. The feature responds to scams that use social engineering to hijack accounts.

- Messenger Suspicion Flags: In certain regions, Messenger will flag common scam indicators in new conversations, such as suspicious job offers, and give users the option to submit recent chat messages for an AI‑driven review, followed by suggested next steps such as blocking or reporting the sender.

Meta said it continues to coordinate with law enforcement and industry peers to disrupt cross‑border scam networks. Recent operations conducted with global agencies led to the disabling of more than 150,000 accounts linked to organized scam networks and the arrest of 21 suspects by the Royal Thai Police. Meta also cited cooperation with Nigerian and UK authorities that led to arrests tied to alleged cryptocurrency impersonation schemes.

These enforcement efforts build on previous joint actions, such as disruption operations that led to the removal of tens of thousands of pages and accounts tied to money laundering and recruitment fraud.

To address fraudulent activity from the advertising side, Meta said it is expanding its advertiser verification requirements, particularly for higher‑risk categories. The goal is for verified advertisers to account for 90% of ad revenue by the end of 2026, up from 70% currently, with verification intended to reduce attempts to misrepresent identities in paid ads.

Meta outlined ongoing global awareness initiatives designed to educate users about common scams. These include campaigns in Southeast Asia and South Asia run in collaboration with governments and international organizations, as well as joint efforts with consumer protection agencies in Latin America during cybersecurity awareness events.

Meta described scam tactics as continually evolving, with criminal actors adapting their techniques to bypass platform defenses. The scale of the removal figures indicates that scam content is still being produced in high volume, and some of it likely reaches users before it is detected. The expanded warnings and AI‑based systems are part of Meta’s stated strategy to shift from reactive removals toward proactive user alerts and broader collaboration with authorities.

Meta says it will continue monitoring scam trends, enforcing policies across its services, and updating users and partners on progress in addressing digital fraud.
